How to Customize Webscale's Autoscaling

Webscale autoscaling supports a variety of rules for scaling a cluster dynamically. Webscale monitors the following metrics on each application server and compares the reported value, over a period of time, against a threshold that you define to decide whether and when to scale the cluster in or out:

- CPU % Utilization

- I/O Wait %

- Kilobytes/second

- Load Average

- Memory Usage %

- Requests/Second

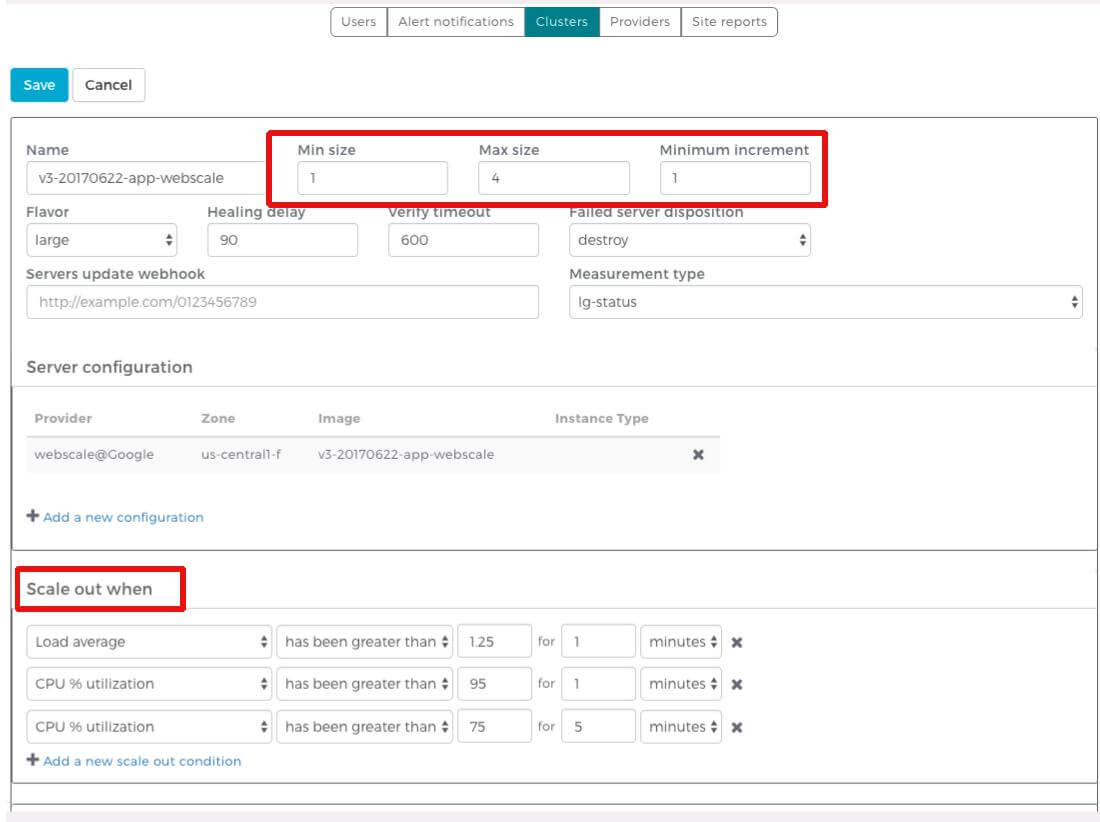

Some examples of autoscaling triggers are “Scale out when Load Average of the system has been greater than 1.25 for 1 minute”, or conversely, “Scale in when Load Average of the system has been less than 0.50 for 1 minute”.
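As a rough illustration of how a "has been greater than X for N minutes" trigger can be evaluated, the sketch below checks that every metric sample in the most recent window satisfies the threshold. It is not Webscale's implementation: the `Sample` type, the function names, and the rule that history must reach back to the start of the window are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Sample:
    timestamp: float  # seconds since an arbitrary epoch
    value: float      # metric reading, e.g. Load Average


def sustained(samples: Sequence[Sample], predicate: Callable[[float], bool],
              duration_s: float, now: float) -> bool:
    """Return True if `predicate` held for every sample in the last `duration_s` seconds."""
    window_start = now - duration_s
    # Assumption: without history reaching back to the window start,
    # we cannot claim the condition held for the whole duration.
    if not any(s.timestamp <= window_start for s in samples):
        return False
    window = [s for s in samples if window_start <= s.timestamp <= now]
    return bool(window) and all(predicate(s.value) for s in window)


def should_scale_out(samples: Sequence[Sample], now: float,
                     threshold: float = 1.25, duration_s: float = 60.0) -> bool:
    # "Scale out when Load Average has been greater than 1.25 for 1 minute"
    return sustained(samples, lambda v: v > threshold, duration_s, now)


def should_scale_in(samples: Sequence[Sample], now: float,
                    threshold: float = 0.50, duration_s: float = 60.0) -> bool:
    # "Scale in when Load Average has been less than 0.50 for 1 minute"
    return sustained(samples, lambda v: v < threshold, duration_s, now)
```

With this approach a single dip below the threshold inside the window resets the trigger, which is one simple way to keep a short spike from scaling the cluster.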

The conditions you can use are:

- has been less than

- has been greater than

- will be less than

- will be greater than
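The four conditions above can be modeled as a small enumeration. In the sketch below, the "has been" conditions compare an observed value against the threshold, and the "will be" conditions compare a forecast value. How Webscale produces that forecast is not described here, so the `forecast` argument, like the `Condition` and `condition_met` names, is a hypothetical stand-in for illustration only.

```python
from enum import Enum


class Condition(Enum):
    HAS_BEEN_LESS_THAN = "has been less than"
    HAS_BEEN_GREATER_THAN = "has been greater than"
    WILL_BE_LESS_THAN = "will be less than"
    WILL_BE_GREATER_THAN = "will be greater than"


def condition_met(condition: Condition, threshold: float,
                  observed: float, forecast: float) -> bool:
    """Check one condition against a threshold.

    `observed` is the value measured over the rule's time period.
    `forecast` is a predicted future value (assumption: supplied by the caller).
    """
    if condition is Condition.HAS_BEEN_LESS_THAN:
        return observed < threshold
    if condition is Condition.HAS_BEEN_GREATER_THAN:
        return observed > threshold
    if condition is Condition.WILL_BE_LESS_THAN:
        return forecast < threshold
    if condition is Condition.WILL_BE_GREATER_THAN:
        return forecast > threshold
    raise ValueError(f"unknown condition: {condition!r}")
```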

Once one of these conditions is met, Webscale triggers the scale in or scale out. Define a minimum and a maximum desired cluster size to bound these scaling actions. Set the minimum to the value your contract defines, for example, 1. Set the maximum high enough to handle surges of legitimate traffic while staying within your cloud account quota limit. A reasonable maximum (for example, 10) leaves buffer room for traffic surges while keeping account expenses low if, for example, an unprecedented volume of bot traffic hits the site.

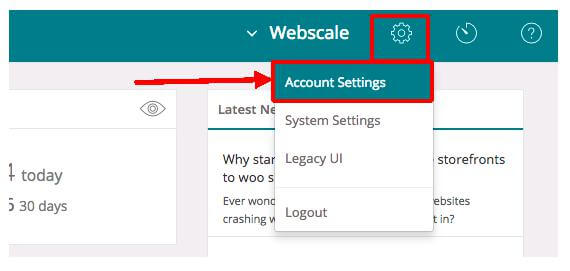
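The effect of the minimum and maximum cluster sizes can be pictured as clamping every scaling decision to a fixed range. The following is a minimal sketch under stated assumptions: the function names are hypothetical, and the one-server step per scaling action is chosen only for illustration, not taken from Webscale's behavior.

```python
def clamp_cluster_size(desired: int, minimum: int, maximum: int) -> int:
    """Keep a desired server count within the configured bounds."""
    if minimum < 1 or maximum < minimum:
        raise ValueError("expected 1 <= minimum <= maximum")
    return max(minimum, min(desired, maximum))


def next_cluster_size(current: int, scale_out: bool, scale_in: bool,
                      minimum: int = 1, maximum: int = 10, step: int = 1) -> int:
    """Apply one scaling action, then clamp the result to [minimum, maximum]."""
    if scale_out:
        desired = current + step   # assumption: one step per trigger
    elif scale_in:
        desired = current - step
    else:
        desired = current
    return clamp_cluster_size(desired, minimum, maximum)
```

For example, with a maximum of 10, a scale-out trigger on a cluster that already has 10 servers leaves the size at 10, which is what caps spending during a surge of unwanted traffic.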

Find the cluster settings in the Account Settings section of the Webscale control panel.

Customize Autoscaling

| 1. Log in to the Webscale control panel and click the gear on the upper right, then select Account Settings from the menu. | |

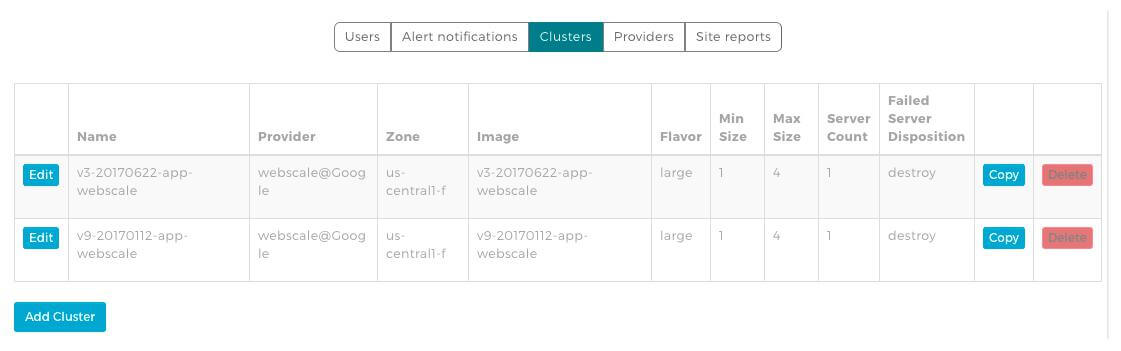

| 2. Click Clusters from the top menu bar to view the cluster information. Click the Edit button to view the details for that cluster. | |

| 3. Make your changes to the min and max server counts for the cluster and other details, including when the cluster scales out or in. Click the Save button at the bottom of the screen to confirm any changes made to the cluster settings. |

Further Reading

Have questions not answered here? Please Contact Support to get more help.
